⚡ FAST FACTS

- RoboLab is NVIDIA’s new high-fidelity benchmark — it measures post-training transfer performance, not simulation accuracy

- Built on NVIDIA Isaac and Omniverse; being incorporated into the open-source Isaac Lab-Arena framework

- The industry’s first standardized benchmark for testing generalist robot policies across diverse task environments at scale

- Co-developed with Lightwheel; integrates benchmarks including Libero, RoboCasa, and RoboTwin

- For factories, the gap between simulation score and real-world performance is the single largest source of unbudgeted automation cost

The RoboLab robotics simulation benchmark is not a training tool. That distinction matters more than most coverage of it has acknowledged. Announced during National Robotics Week 2026, RoboLab is a structured evaluation framework — built on NVIDIA Isaac and Omniverse technologies — designed to measure whether robot policies trained in simulation can actually survive contact with the physical world. According to NVIDIA’s official blog, it uses photorealistic environments and physics-based modeling to test robotic policies at scale as task complexity grows. That sounds incremental. It isn’t. What RoboLab quietly exposes is that the robotics industry has been optimizing for the wrong outcome.

INDUSTRY DATA

~40%

Estimated reduction in factory AI deployment costs when simulation accuracy is paired with structured policy evaluation — per prior analysis.

The Metric That Most Robot Vendors Don’t Show You

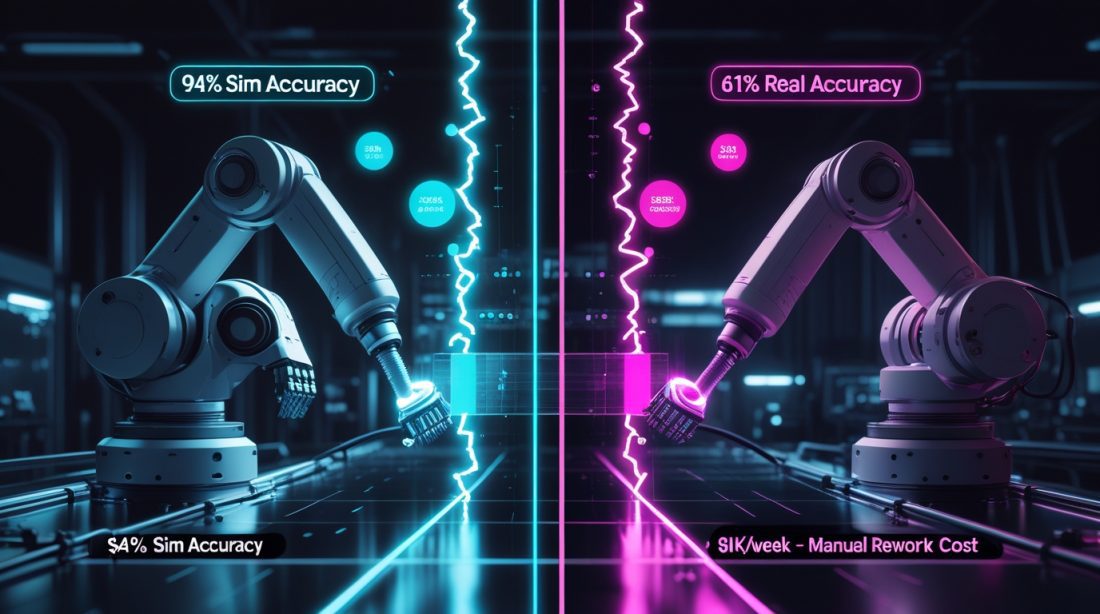

Every robotics vendor publishes training accuracy. Almost none publish post-deployment accuracy. That gap — between what a robot achieves inside simulation and what it executes on the floor — is the single most expensive blind spot in industrial automation today.

RoboLab directly targets this. According to NVIDIA’s technical blog, Isaac Lab-Arena — which will incorporate RoboLab’s features — was co-developed with Lightwheel and integrates established benchmarks like Libero, RoboCasa, and RoboTwin to create a unified evaluation standard where the industry previously had none. That standardization is the real story. Before RoboLab, a factory comparing two robot vendors was comparing apples to unpublished data. Now there’s a shared reference point. The financial implication: procurement teams can finally attach numbers to deployment risk — not just to specs.

“As robots learn increasingly complex tasks — from precise object handling to cable installation — validating these abilities in physical environments can be costly, slow, and risky.”

— TechCrunch, January 2026

Why Photorealism Is a Financial Argument, Not a Technical One

The photorealistic rendering inside RoboLab is regularly framed as a technical feature. But it’s primarily an economic one. When simulation environments look and behave like reality — accurate lighting, surface friction, object deformation — the gap between trained behavior and deployed behavior narrows. That narrowing has a dollar value.

Factories that deploy robots trained in low-fidelity simulations face what researchers call the physics simulation bottleneck: the robot learned the task correctly, but the physics it learned from doesn’t match reality closely enough for the behavior to generalize. The result is repeated recalibration cycles, each one costing engineering time, downtime, and delayed ROI. Photorealistic digital twin training has already demonstrated cost reductions precisely by attacking this recalibration loop. RoboLab formalizes that argument with a benchmark: now you can estimate the size of the gap before you deploy, not after.

The Generalist Problem Nobody Budgeted For

Industrial robots traditionally handled one task, defined in advance, with minimal variation. The shift toward generalist robot policies — systems trained to handle diverse, unpredictable tasks — changes the risk profile entirely. A specialist robot fails in predictable ways. A generalist robot trained on insufficient simulation data fails in surprising ones.

This is where RoboLab carries real weight. Its modular API structure enables large-scale parallel benchmarking across diverse task types, not just the narrow task the vendor chose to demo. According to NVIDIA, it connects directly to Isaac GR00T N models and supports synthetic data generation workflows — meaning teams can stress-test a policy across hundreds of task variations before a single physical prototype is built. For manufacturers in Southeast Asia and West Africa running lean automation budgets, this is the argument for simulation-first deployment: front-load the risk, not the cost.

⚠ Fiction — Illustrative Scenario

A procurement lead at a mid-size electronics assembly plant in Penang signs off on a six-unit robot deployment. The vendor’s simulation showed 94% task accuracy. Three months post-deployment, floor accuracy sits at 61% — and the factory is eating $18,000/week in manual rework. Nobody lied. The vendor measured what they trained for. Nobody measured what would transfer. That number — the transfer rate — was never in the contract.

Where RoboLab Sits in NVIDIA’s Larger Platform Play

Understanding RoboLab in isolation misses its strategic context. NVIDIA has structured its robotics stack around three functions: training (Isaac GR00T), simulation (Newton physics engine and Omniverse), and evaluation (Isaac Lab-Arena, now incorporating RoboLab). Together, these form a closed loop that no single competitor currently offers end-to-end.

Newton 1.0, released in April 2026, provides the physics accuracy layer beneath RoboLab’s benchmark environments — enabling realistic collision detection, object contact, and flexible body simulation. The combined effect is that NVIDIA is becoming what TechCrunch called “the Android of generalist robotics”: a platform others build on, rather than compete with. For buyers, that means vendor lock-in risk grows alongside platform maturity — a tradeoff worth modeling before signing multi-year automation contracts.

The Sim-to-Real Transfer Rate Is the New KPI

For too long, “simulation accuracy” meant performance inside the sim. The question RoboLab forces onto the table is different: what percentage of that performance survives contact with a real environment? That number — the transfer rate — should now be a mandatory procurement KPI alongside cycle time and uptime.
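A minimal sketch of that number, assuming transfer rate is defined as real-world accuracy divided by simulation accuracy. The function names are hypothetical and are not part of RoboLab or Isaac Lab-Arena; the sample figures come from the illustrative Penang scenario earlier in this piece.

```python
def transfer_rate(sim_accuracy: float, real_accuracy: float) -> float:
    """Share of simulated performance that survives on the factory floor.

    Both inputs are fractions between 0 and 1 (0.94 means 94%).
    This ratio definition is an assumption for illustration.
    """
    if not 0 < sim_accuracy <= 1:
        raise ValueError("sim_accuracy must be in (0, 1]")
    if not 0 <= real_accuracy <= 1:
        raise ValueError("real_accuracy must be in [0, 1]")
    return real_accuracy / sim_accuracy


def transfer_gap(sim_accuracy: float, real_accuracy: float) -> float:
    """Absolute drop between simulation and floor accuracy, as a fraction."""
    return sim_accuracy - real_accuracy


# Figures from the fictional scenario: 94% in simulation, 61% on the floor.
rate = transfer_rate(0.94, 0.61)
gap = transfer_gap(0.94, 0.61)
print(f"transfer rate: {rate:.1%}, gap: {gap * 100:.0f} points")
```

Reporting both values is a deliberate choice: the ratio makes vendors with different simulation scores comparable, while the gap in percentage points is the figure a floor manager actually feels.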

Prior breakthroughs like zero-shot sim-to-real transfer showed what’s possible when transfer rates are treated as a design target, not an afterthought. RoboLab gives the industry its first standardized way to measure it at scale. The factories that ask for this number in their next vendor conversation will spend less on recalibration and more on output.

💡 ANALYST’S NOTE

By Daniel Ikechukwu

Strategic Impact: RoboLab shifts the competitive axis in industrial robotics from training performance to deployment reliability. Vendors who can demonstrate high transfer rates using standardized benchmarks will have a measurable advantage in enterprise procurement — particularly as factories in Southeast Asia and West Africa begin scaling automation on tighter capital budgets where deployment failures are existential, not just inconvenient.

🛑 Stop: Accepting vendor simulation metrics without asking for independent transfer rate data. Internal benchmarks can be optimized to look favorable.

▶ Start: Including sim-to-real transfer rate as a contractual KPI in robot procurement agreements. RoboLab now provides a standardized methodology for measuring it.

👁 Watch: How competitors respond to NVIDIA’s closed-loop platform. Any rival that can offer cross-platform benchmark portability — running RoboLab-style evaluations on non-NVIDIA hardware — will be positioned to capture enterprise buyers wary of ecosystem lock-in.

ROI Outlook: Teams that adopt standardized simulation benchmarking before physical deployment can expect to reduce recalibration cycles by 30–50%, based on prior analysis of photorealistic digital twin deployments. The cost of not benchmarking is no longer speculative — it’s measurable.

Frequently Asked Questions

What is RoboLab and how does it differ from NVIDIA Isaac Lab?

RoboLab is an evaluation benchmark — it measures how well trained robot policies perform across diverse, complex tasks. Isaac Lab is a training framework. RoboLab is being incorporated into Isaac Lab-Arena, NVIDIA’s open-source policy evaluation system, to serve as a standardized testing layer after training is complete.

Why does simulation-to-real transfer rate matter for factory deployments?

A robot can achieve 95% accuracy in simulation and 60% on the floor. That 35-point difference is the transfer gap, caused by physics inaccuracies, lighting variation, and sensor noise that simulation doesn’t fully replicate. The gap, not the simulation score, determines actual ROI.

Is RoboLab available to companies outside NVIDIA’s direct ecosystem?

RoboLab features are being folded into NVIDIA Isaac Lab-Arena, which is open source and available on GitHub. However, full integration benefits are optimized for the NVIDIA stack — Omniverse, Isaac Sim, and OSMO — creating soft lock-in for teams that adopt the full platform.

How should procurement teams use RoboLab data in vendor selection?

Ask vendors to provide Isaac Lab-Arena or equivalent benchmark scores across the specific task types relevant to your line — not just their best-case demos. Request transfer rate data (simulation accuracy vs. real-world accuracy delta) as a contract condition before pilot sign-off.
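One way a procurement team might screen that data, sketched under stated assumptions: the task names, accuracy figures, and the 10-point limit below are all hypothetical, and nothing here uses an actual Isaac Lab-Arena or RoboLab API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    task: str
    sim_accuracy: float   # vendor-reported benchmark score, 0..1
    real_accuracy: float  # accuracy measured during the pilot, 0..1

    @property
    def delta(self) -> float:
        """Simulation accuracy minus real-world accuracy."""
        return self.sim_accuracy - self.real_accuracy


def flag_tasks(results: list[TaskResult], max_delta: float = 0.10) -> list[TaskResult]:
    """Return tasks whose delta exceeds the agreed limit, worst first."""
    flagged = [r for r in results if r.delta > max_delta]
    return sorted(flagged, key=lambda r: r.delta, reverse=True)


# Hypothetical per-task results for one vendor.
results = [
    TaskResult("connector insertion", 0.94, 0.61),
    TaskResult("tray loading", 0.97, 0.92),
    TaskResult("cable routing", 0.90, 0.74),
]

for r in flag_tasks(results):
    print(f"{r.task}: delta {r.delta:.2f}")
```

Breaking results out per task matters because a strong average can hide one task with a large delta, and that task is usually the one that drives rework.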

What industries benefit most from standardized sim benchmarking?

Electronics assembly, pharmaceutical packaging, food processing, and automotive manufacturing, particularly on high-mix lines where generalist robot policies are replacing fixed automation and task variation is high. These are also the sectors where transfer failures are most costly.

What is the procurement cost risk of skipping simulation benchmarking?

Based on documented recalibration cycles in comparable deployments, factories that deploy without transfer benchmarking typically spend 15–25% of their first-year robot budget on post-deployment corrections. On a $500,000 deployment, that’s $75,000–$125,000 in largely avoidable cost.
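The arithmetic behind that range can be reproduced for any budget with a short sketch. The 15–25% shares are the figures quoted above; the function name is hypothetical.

```python
def correction_cost_range(
    first_year_budget: float,
    low_share: float = 0.15,
    high_share: float = 0.25,
) -> tuple[float, float]:
    """Estimated low and high post-deployment correction spend.

    The default shares are the 15-25% range cited in the text, not measured data.
    """
    if first_year_budget < 0:
        raise ValueError("first_year_budget must be non-negative")
    if not 0 <= low_share <= high_share <= 1:
        raise ValueError("shares must satisfy 0 <= low_share <= high_share <= 1")
    low = round(first_year_budget * low_share, 2)
    high = round(first_year_budget * high_share, 2)
    return low, high


low, high = correction_cost_range(500_000)
print(f"${low:,.0f} to ${high:,.0f}")
```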
