TL;DR — Read This First

What This Article Covers

- The physics simulation bottleneck — not hardware — is the primary barrier to deploying general-purpose robots at scale in 2026

- The “reality gap” refers to the collection of discrepancies between simulated and real-world physics that cause trained robot policies to fail on deployment

- NVIDIA’s Jensen Huang said at CES 2026 that collecting real-world training data is “slow and costly and it’s never enough,” which makes simulation the only viable path to scale

- 5 specific failure points — contact dynamics, sensor fidelity, actuator modeling, material deformation, and environmental unpredictability — drive the gap

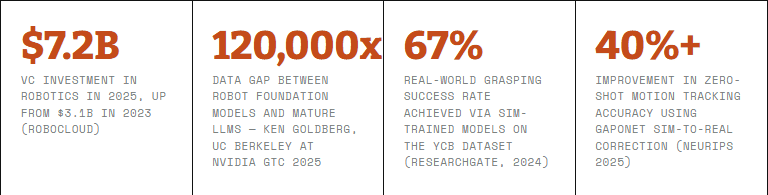

- VCs invested $7.2 billion in robotics in 2025, up from $3.1 billion in 2023 — the financial pressure to solve this is accelerating

- NVIDIA Newton, MuJoCo, and emerging differentiable simulators are the leading technical responses — each with distinct tradeoffs

The physics simulation bottleneck in robotics is the problem nobody in the industry wants to lead with — because it exposes the distance between what robots can do in a lab demo and what they can actually do in a warehouse, a hospital corridor, or an unstructured factory floor.

Here is the core tension: simulation is the only practical way to generate the volume of training data that general-purpose robots require. Collecting real-world training data is, as NVIDIA CEO Jensen Huang stated directly at CES 2026, “slow and costly and it’s never enough.” A robot that needs to learn how to pick up 10,000 different objects in 10,000 different orientations cannot acquire that experience through physical trials. It needs simulation. But the moment simulation becomes the primary training environment, the quality of that simulation’s physics engine determines everything.

And right now, no simulator is accurate enough to fully close what researchers call the reality gap: the collection of discrepancies between how physics behaves in a mathematical model and how it behaves in the real world.

This matters beyond robotics research. The humanoid robot market is projected to reach $6.24 billion in 2026, and that money is being committed against a problem that simulation accuracy has not fully solved. Understanding where the bottleneck actually sits, and who is making real progress on it, is the difference between an informed deployment decision and an expensive mistake.

Why Physics Simulation — Not Hardware — Is the Real Constraint on General-Purpose Robots

The framing that dominates most robotics coverage focuses on hardware: battery life, actuator strength, sensor arrays, compute at the edge. These are real constraints. But they are downstream of a more fundamental problem.

A robot’s physical capability means very little if the policy governing its behavior — the learned decision-making logic trained in simulation — breaks down the moment it encounters real friction, real contact forces, real sensor noise. The hardware can be perfect. If the simulation it trained in was not physically accurate, the policy is fragile.

As a 2025 survey published in the 2026 Annual Review of Control, Robotics, and Autonomous Systems put it plainly: “There are no perfect simulators, and therefore there is always a difference from the real world.” The question is how large that difference is, and how many real-world tasks it prevents.

“The physical world is diverse and unpredictable. Collecting real-world training data is slow and costly and it’s never enough.”

Jensen Huang, NVIDIA CEO, CES 2026 Keynote

Huang’s answer to this, presented at CES 2026, was NVIDIA Cosmos — a world foundation model designed to generate physically plausible synthetic scenarios at scale. His framing: “Cosmos turns compute into data.” That is not a marketing line. It is a statement about where the constraint actually lives. If data were easy to collect in the real world, Cosmos would not need to exist.

Why the Reality Gap Exists: 5 Physics Simulation Bottlenecks Explained

The reality gap is not a single problem. It is a collection of sub-gaps, each arising from a different place where mathematical approximation diverges from physical reality. According to the comprehensive reality gap survey by researchers at the University of Zurich and MIT, these are the five most consequential failure points:

Bottleneck 01

Contact Dynamics — The Friction Problem

Real-world contact interactions between a robot’s end-effector and an object are nonlinear, complex, and highly sensitive to surface properties. Materials deform under pressure. Friction coefficients shift as relative velocity changes. Contact states alternate between sticking, slipping, and separation in fractions of a second.

Simulators handle this by using simplified models — point contacts, linearized friction cones, spring-damper systems. These approximations make computation feasible but introduce physical artifacts: unstable grasps, spurious internal forces, unrealistic motion patterns. For manipulation tasks — picking up a glass, threading a cable, assembling components — these artifacts are not minor. They are the difference between a working policy and a failed deployment.
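
To make these approximations concrete, here is a minimal sketch of a spring-damper point contact with a smoothed Coulomb friction limit. The function names, the stiffness and damping values, and the `tanh` smoothing are illustrative assumptions, not the contact model of any particular simulator. The second function shows how sensitive a two-finger grasp is to the friction coefficient the simulator assumed.

```python
import math

GRAVITY = 9.81  # m/s^2


def penalty_contact_force(penetration, penetration_rate, tangential_velocity,
                          stiffness=5.0e4, damping=50.0, mu=0.6):
    """Return (normal_force, friction_force) in newtons for one point contact.

    penetration: overlap depth in metres (> 0 means the bodies overlap)
    penetration_rate: rate of change of the overlap in m/s
    tangential_velocity: sliding speed along the surface in m/s
    All default parameter values are hypothetical.
    """
    if penetration <= 0.0:
        return 0.0, 0.0  # bodies are separated, so no contact force
    # Spring-damper normal force, clamped so the contact can push but never pull.
    normal = max(0.0, stiffness * penetration + damping * penetration_rate)
    # Coulomb friction opposes sliding and is capped at mu * normal.
    # tanh smooths the stick/slip switch, which is itself an approximation.
    friction = -mu * normal * math.tanh(tangential_velocity / 1e-3)
    return normal, friction


def grasp_holds(grip_force, mass, mu):
    """Two-finger grasp: friction at both fingers must carry the object's weight."""
    return 2.0 * mu * grip_force >= mass * GRAVITY


# Same grip force, same object, different real-world friction coefficient.
print(grasp_holds(grip_force=5.0, mass=0.5, mu=0.6))  # friction assumed in simulation
print(grasp_holds(grip_force=5.0, mass=0.5, mu=0.4))  # slicker real surface
```

In this toy setup the grasp holds at the simulated friction value and slips at the lower one, which is the kind of failure the section describes: nothing about the policy changed, only the surface.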

Real-world consequence

A robot trained to grasp objects using simplified contact physics in simulation will encounter a different friction landscape on the factory floor. Policies that worked flawlessly in simulation slip, drop, or apply incorrect force in physical operation.

Bottleneck 02

Sensor Fidelity — What the Robot Sees Versus What’s There

Simulated sensors — cameras, LiDAR, depth sensors, proprioceptive feedback — produce clean, idealized data. Real sensors produce noise. Camera images contain motion blur, lens distortion, changing light conditions, and reflective surfaces that simulation rarely replicates accurately. Depth sensors return missing data points on translucent or shiny objects. IMU readings carry drift.

When a robot’s perception system is trained on pristine simulated sensor data and then deployed against messy real-world input, the policy faces inputs it was never trained to handle, and its outputs become unreliable. This is particularly acute for vision-based manipulation, where the policy must infer object pose, material, and state from what the camera sees.
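
One common mitigation is to corrupt the simulated sensor stream before the policy sees it. The sketch below is a simplified illustration under assumed noise models (Gaussian depth noise, random dropouts, and a random-walk gyro bias); the function names and default values are hypothetical and would need to be fitted to a real sensor.

```python
import random


def corrupt_depth_row(depths, noise_std=0.01, dropout_prob=0.05, rng=None):
    """Add Gaussian noise and random dropouts to a list of depth readings (metres).

    Dropped readings become None, mimicking the missing returns a depth
    sensor produces on shiny or translucent surfaces.
    """
    if rng is None:
        rng = random.Random()
    noisy = []
    for depth in depths:
        if rng.random() < dropout_prob:
            noisy.append(None)
        else:
            noisy.append(max(0.0, depth + rng.gauss(0.0, noise_std)))
    return noisy


def drifting_gyro(true_rates, bias_walk_std=1e-4, noise_std=1e-3, rng=None):
    """Return gyro readings with white noise plus a slowly wandering bias (drift)."""
    if rng is None:
        rng = random.Random()
    bias = 0.0
    readings = []
    for rate in true_rates:
        bias += rng.gauss(0.0, bias_walk_std)  # bias performs a random walk
        readings.append(rate + bias + rng.gauss(0.0, noise_std))
    return readings


rng = random.Random(0)
print(corrupt_depth_row([1.00, 1.02, 1.05, 1.10], rng=rng))
print(drifting_gyro([0.0] * 5, rng=rng))
```

Passing a seeded `random.Random` keeps the corruption reproducible, which helps when comparing policies trained with and without sensor noise.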

Real-world consequence

Vision-based robot policies trained in simulation commonly fail on real-world objects with unusual textures, reflective surfaces, or in variable lighting conditions — environments that are standard in actual manufacturing and logistics settings.

Bottleneck 03

Actuator Modeling — The Motor Delay Mismatch

Standard simulators often model joints as ideal: instant response, perfect torque delivery, no internal friction or backlash. Real actuators have motor delays, internal springs, damping, mechanical backlash, and thermal behavior that changes the effective torque at each joint over time. These are the kinds of details that are “rarely modeled” in standard robot simulators, as the Annual Review researchers noted.

The consequence is that locomotion policies — walking, running, climbing — that appear stable in simulation exhibit oscillations, instability, or over-correction in physical hardware. The robot’s control loop is tuned against a joint model that does not match its actual hardware.
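
The mismatch can be sketched with a toy actuator that adds the three effects an ideal joint model leaves out: a transport delay, a first-order lag, and torque saturation. The class name and every parameter value here are assumptions chosen for illustration; backlash and thermal effects are deliberately omitted to keep the sketch short.

```python
from collections import deque


class LaggedActuator:
    """Toy actuator: fixed command delay, first-order lag, and torque limit."""

    def __init__(self, delay_steps=3, time_constant=0.02, dt=0.002, max_torque=40.0):
        # Commands wait in this queue for delay_steps control ticks.
        self.buffer = deque([0.0] * delay_steps)
        # Discrete low-pass filter coefficient for the first-order lag.
        self.alpha = dt / (time_constant + dt)
        self.max_torque = max_torque
        self.torque = 0.0  # torque actually delivered at the joint

    def step(self, commanded_torque):
        self.buffer.append(commanded_torque)
        delayed = self.buffer.popleft()
        # Saturate: the motor cannot exceed its torque limit.
        delayed = max(-self.max_torque, min(self.max_torque, delayed))
        # The delivered torque approaches the delayed command gradually.
        self.torque += self.alpha * (delayed - self.torque)
        return self.torque


actuator = LaggedActuator()
delivered = [actuator.step(10.0) for _ in range(10)]
print([round(t, 3) for t in delivered])
```

An ideal joint would deliver the full 10 N·m on the first tick. Here the first three ticks deliver nothing and the torque then ramps up, so a controller tuned against the ideal model sees a response it was never trained on.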

Real-world consequence

Legged robots trained in simulation frequently exhibit gait instability or energy inefficiency on physical deployment because their joint dynamics were approximated away during training. Correcting this typically requires expensive iterative hardware trials.

Bottleneck 04

Rigid Body Assumptions — The Deformable World Problem

Standard robot simulators assume perfectly rigid bodies. Real objects are not rigid. Foam compresses. Fabric folds. Fruit bruises. Even robot links flex slightly under load. A policy trained with rigid-body assumptions encounters deformable, compliant objects in the real world and has no learned framework for predicting how they will respond to applied force.

This is why tasks that seem trivial — picking up a soft package, folding a cloth, handling food items — remain unsolved at scale despite years of research. The simulation trained on rigid approximations simply did not teach the robot what it needed to know.
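
A back-of-the-envelope sketch shows why the rigid assumption hides the failure. It treats a gripped object as a linear spring, which is itself a strong simplification of real material behavior; the stiffness values, grip force, and compression limit are hypothetical numbers picked for illustration.

```python
def compression_m(grip_force_n, stiffness_n_per_m=None):
    """Compression of a gripped object under a linear-elastic assumption.

    stiffness_n_per_m=None stands in for the rigid-body assumption:
    the object does not deform no matter how hard it is squeezed.
    """
    if stiffness_n_per_m is None:
        return 0.0
    return grip_force_n / stiffness_n_per_m


def passes_quality_check(grip_force_n, stiffness_n_per_m, max_compression_m=0.006):
    """True if the object is compressed no more than the allowed limit."""
    return compression_m(grip_force_n, stiffness_n_per_m) <= max_compression_m


LEARNED_GRIP_FORCE_N = 20.0  # hypothetical force the policy converged on

for label, stiffness in [("rigid (simulator)", None),
                         ("package at spec", 4000.0),
                         ("softer package", 2500.0)]:
    squeeze_mm = compression_m(LEARNED_GRIP_FORCE_N, stiffness) * 1000.0
    ok = passes_quality_check(LEARNED_GRIP_FORCE_N, stiffness)
    print(f"{label:18s} compression={squeeze_mm:.1f} mm pass={ok}")
```

With these numbers the same grip force passes in the rigid model and on the in-spec package, and fails on the softer one. The simulator never had a variable that could represent the difference.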

Real-world consequence

Logistics and food handling robots frequently struggle with deformable items precisely because their training simulations treated all objects as rigid. This limits deployment to standardized, predictable items — the opposite of a general-purpose system.

Bottleneck 05

Environmental Unpredictability — What No Simulation Fully Captures

Real environments change in ways that are computationally impractical to simulate comprehensively. Air currents affect grasps. Temperature changes alter material properties. Other humans and machines introduce dynamic occlusions and unexpected trajectories. Floor surfaces vary in friction across the same facility depending on cleaning cycles and foot traffic.

Simulation worlds tend toward controlled, static environments. The real world is neither. Human-in-the-loop workflows remain a critical bridge precisely because this environmental unpredictability still exceeds what simulation can train robots to handle autonomously.

Real-world consequence

Robots deployed in variable environments — outdoor logistics, construction sites, emergency response — show the steepest performance degradation from simulation to deployment. The gap between controlled test environments and actual operating conditions is where most deployments stall.

Field Note: Fiction

A robotics engineer I’ll call Marcus spent eight months training a manipulation policy for a packaging line. In simulation, it worked. Every time. Thousands of iterations, clean success rates, the kind of numbers that justify a product demo.

On deployment day, the robot picked up the first package, applied the exact force its policy had learned, and crushed it. Not dramatically, just enough to fail the quality check. Then the second. Then the third.

The issue wasn’t the model. The simulation had used a rigid-body assumption for the packages. The actual packages were slightly softer than spec. Eight months of training, invalidated by a single physical property that the simulator had never modeled. Marcus told me afterward: “We didn’t have a robot problem. We had a physics problem.”

CreedTec Analyst, Illustrative Account Based on Documented Industry Patterns

Why the Industry Is Responding Now — and What’s Actually Being Built

The financial pressure to solve the physics simulation bottleneck has reached a tipping point. VCs put $7.2 billion into robotics in 2025 — more than double the 2023 figure. That capital is betting on general-purpose deployment. The simulation gap is the primary technical risk to those bets paying off.

Three serious technical responses have emerged:

| Platform | Approach | Key Advantage | Limitation |
|---|---|---|---|
| NVIDIA Newton + Isaac Sim | GPU-accelerated physics engine, co-developed with Google DeepMind and Disney Research | Simulates complex actions (walking through gravel, handling deformable fruit) at scale | Requires NVIDIA hardware infrastructure; ecosystem lock-in |
| MuJoCo (Google DeepMind) | Superior contact dynamics and joint modeling; GPU-accelerated via MJX on JAX | Physics accuracy for manipulation; zero-shot sim-to-real across multiple robot types demonstrated | Sensor plugin support less mature than Gazebo or Isaac Sim as of early 2026 |
| Differentiable Simulators | Simulators that can be optimized via gradient descent, so the simulation learns to match the real world | Closes the gap dynamically rather than statically; adapts to real-world measurements | Computationally intensive; still primarily research-stage for most applications |

NVIDIA’s Newton physics engine, announced in beta and built into Isaac Lab, represents the most commercially significant push to date. Its flexible design allows simulation of extremely complex robot actions — walking through snow or gravel, handling soft objects — that standard rigid-body simulators could not approach. Adopters already include ETH Zurich, Technical University of Munich, Boston Dynamics, Figure AI, and Franka Robotics.
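
The differentiable-simulator idea from the comparison above can be illustrated with a deliberately tiny example: a block sliding with Coulomb friction, where the friction coefficient is tuned by gradient descent until the simulated final position matches a measurement. This is a sketch under stated assumptions. The derivative is propagated through the rollout by hand, whereas a real differentiable simulator would typically obtain it through automatic differentiation, and the "measurement" here is generated by the same model rather than by a real robot. Function names, step size, and horizon are hypothetical.

```python
G = 9.81  # m/s^2


def rollout(mu, v0=2.0, dt=0.01, steps=20):
    """Slide a block for a short horizon.

    Returns the final position and its derivative with respect to mu.
    The horizon is short enough that the block never stops for mu <= 1,
    which keeps the rollout smooth in mu.
    """
    x, v = 0.0, v0
    dx_dmu, dv_dmu = 0.0, 0.0
    for _ in range(steps):
        # Friction decelerates the block; track how v depends on mu.
        v = v - mu * G * dt
        dv_dmu = dv_dmu - G * dt
        # Integrate position; track how x depends on mu.
        x = x + v * dt
        dx_dmu = dx_dmu + dv_dmu * dt
    return x, dx_dmu


def fit_friction(x_measured, mu_init=0.2, learning_rate=5.0, iterations=100):
    """Gradient descent on squared position error to identify mu."""
    mu = mu_init
    for _ in range(iterations):
        x_sim, dx_dmu = rollout(mu)
        error = x_sim - x_measured
        mu -= learning_rate * 2.0 * error * dx_dmu  # d(error^2)/d(mu)
    return mu


# Stand-in for a real measurement: the position produced by a "true" mu of 0.45.
x_real, _ = rollout(0.45)
print(round(fit_friction(x_real), 4))
```

The design point is that the simulator's parameters become something you fit to hardware data, instead of constants chosen once and trusted. The cost noted in the comparison also shows up here: every optimization step requires a full rollout plus its gradient.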

At the same time, the research community’s 2026 consensus is that no single simulator solves the problem completely. Domain randomization — deliberately varying physical parameters during training so the policy learns robustness rather than precision — remains one of the most practically effective techniques, even if it is not elegant.

📌 What Domain Randomization Actually Means

Rather than training on one exact physical model, domain randomization trains on thousands of slightly different models simultaneously — varying friction coefficients, mass distributions, sensor noise levels. The policy that emerges is less optimized for any single simulation but more robust when transferred to the real world. It’s a workaround for an unsolved problem, and it works.
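
In code, the core of domain randomization is small: draw a fresh set of physical parameters for every training episode. The sketch below assumes uniform sampling over hand-picked ranges; the parameter names and bounds are hypothetical, and a real pipeline would pass each sampled dictionary to its simulator before the episode starts.

```python
import random

# Hypothetical ranges; in practice these are chosen to bracket the real hardware.
PARAM_RANGES = {
    "friction": (0.4, 1.0),
    "object_mass_kg": (0.1, 2.0),
    "sensor_noise_std": (0.0, 0.02),
}


def sample_domain(rng):
    """Draw one randomized set of physical parameters."""
    return {name: rng.uniform(low, high) for name, (low, high) in PARAM_RANGES.items()}


def randomized_episodes(num_episodes, seed=0):
    """Yield one parameter set per training episode, reproducibly."""
    rng = random.Random(seed)
    for _ in range(num_episodes):
        yield sample_domain(rng)


for params in randomized_episodes(3):
    # A training loop would configure the simulator with params here.
    print({name: round(value, 3) for name, value in params.items()})
```

Wider ranges buy robustness at the cost of peak performance in any single environment, which is the tradeoff described above.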

Why This Matters Beyond the Lab — The Financial Logic of Solving Simulation

The physics simulation bottleneck is not an academic problem. It has a direct financial cost that flows through to every organization deploying or evaluating robotics technology in 2026.

Every failed sim-to-real transfer represents wasted training compute, wasted deployment engineering time, and — in the worst cases — physical damage to hardware or product. Asset tracking and digital passport systems are increasingly being deployed precisely to capture this failure data at scale, turning deployment failures into structured feedback that improves simulation accuracy over time.

The organizations that invest now in understanding which simulation platforms match their specific deployment environments — and which physical scenarios their chosen simulator handles poorly — will spend less on failed deployments. That is a defensible ROI argument that finance teams can evaluate directly.

The broader market signal is clear. According to Ken Goldberg of UC Berkeley, the data gap between robot foundation models and mature language models could be as large as 120,000x. Closing that gap through real-world data collection alone is not economically viable. Simulation must improve. The companies building better physics engines are not serving academics — they are building the infrastructure that general-purpose robotics depends on.

Frequently Asked Questions

What is the physics simulation bottleneck in robotics?

The physics simulation bottleneck refers to the gap between how robots behave inside a simulator and how they perform in the real world. Simulators simplify complex physical phenomena (friction, contact dynamics, material deformation) in ways that cause trained robot policies to fail or degrade when transferred to physical hardware. This “reality gap” is the primary obstacle preventing general-purpose robots from scaling into unstructured real-world environments.

Why is sim-to-real transfer so difficult for general-purpose robots?

Sim-to-real transfer fails because physical reality is far more complex than any mathematical model can capture at practical compute speeds. Key failure points include inaccurate contact physics, sensor noise discrepancies, actuator delay mismatches, and the inability to model real-world material deformation. A robot trained with rigid-body assumptions in simulation encounters deformable, unpredictable objects in the real world and the policy breaks down.

What companies are solving the physics simulation gap?

NVIDIA is leading commercially with its Newton physics engine — co-developed with Google DeepMind and Disney Research — built into Isaac Sim and Isaac Lab. MuJoCo, backed by Google DeepMind, offers strong physics accuracy for manipulation tasks. Gazebo remains widely adopted for general-purpose robotics. Research institutions are advancing differentiable simulators that adapt dynamically to real-world measurements.

What is domain randomization and does it work?

Domain randomization is the practice of training robot policies across thousands of slightly varied physical environments simultaneously — randomizing friction, mass, sensor noise — so the resulting policy is robust to real-world variation rather than perfectly tuned to one simulated environment. It is one of the most practically effective current approaches to bridging the sim-to-real gap, though it is a workaround rather than a fundamental solution.

How does the physics simulation problem affect organizations deploying robots today?

Every failed sim-to-real transfer represents wasted training compute, engineering time, and — in physical deployments — potential damage to hardware or product. Organizations that understand which simulation platforms match their specific deployment environments, and which physical scenarios their simulator handles poorly, make significantly fewer expensive deployment errors.

The Bottleneck Will Be Solved — The Question Is Who Solves It First

General-purpose robotics is not waiting on a hardware breakthrough. The actuators exist. The compute exists. The sensors exist. What does not yet fully exist is a simulation environment accurate enough to train policies that transfer reliably to the unstructured, deformable, noisy, unpredictable real world.

That gap is closing. NVIDIA’s investment in Newton, DeepMind’s continued development of MuJoCo, and the research community’s accelerating work on differentiable simulation are all pointed at the same problem. When the 2026 Annual Review of Control, Robotics, and Autonomous Systems frames it as the “most critical and long-standing challenge in robotics,” that reflects genuine consensus, not academic conservatism.

For operators, the practical implication is this: the simulation platform you choose for training today determines what your robot can reliably do tomorrow. Not choosing is also a choice — it just happens to be an expensive one.

The physics have to come first. Everything else in general-purpose robotics depends on getting them right.
